The performance demands of latency-sensitive applications have long been thought to be incompatible with virtualization. Applications such as distributed in-memory data management, stock trading, and high-performance computing (HPC) demand very low latency or jitter, typically no more than tens of microseconds.

Although virtualization simplifies IT management and saves costs, these benefits come with an inherent overhead from abstracting physical hardware and resources and sharing them.

Virtualization overhead can increase both processing time and its variability. VMware vSphere minimizes this overhead so that it is not noticeable for a wide range of applications, including most business-critical applications such as database systems, Web applications, and messaging systems.

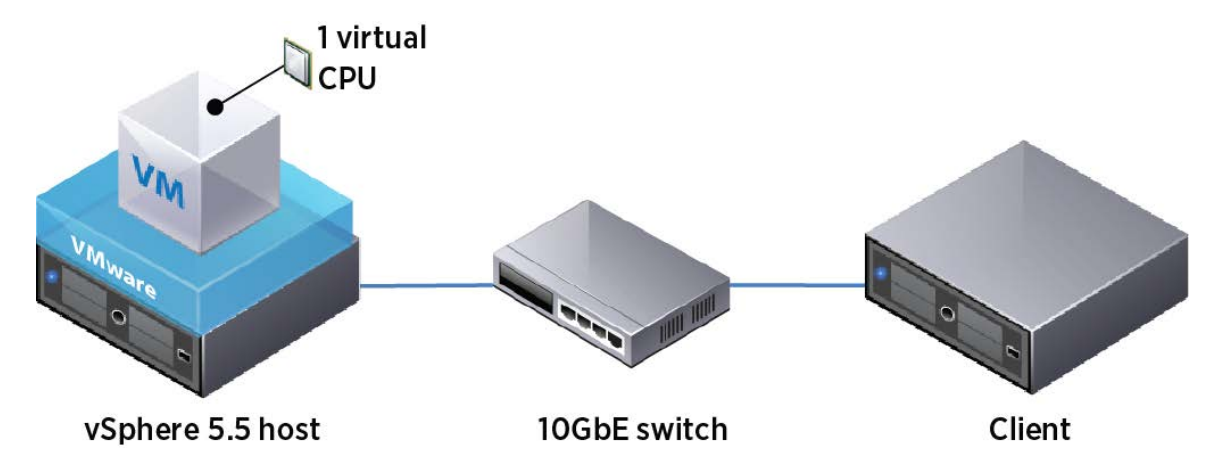

vSphere also works well for applications with millisecond-level latency constraints, such as VoIP streaming applications. However, certain extremely latency-sensitive applications would still be affected by the overhead because of their strict latency requirements. To support virtual machines with such requirements, vSphere 5.5 introduces a new per-VM feature called Latency Sensitivity.

Among other optimizations, this feature allows virtual machines to exclusively own physical cores, thus avoiding overhead related to CPU scheduling and contention. Combined with pass-through functionality, which bypasses the network virtualization layer, it lets applications achieve near-native performance in both response time and jitter.

In brief, this paper does the following:

- It explains major sources of latency increase due to virtualization, which are divided into two categories: contention created by sharing resources and overhead due to the extra layers of processing for virtualization.

- It presents details of the latency-sensitivity feature that improves performance in terms of both response time and jitter by eliminating the major sources of extra latency added by using virtualization.

- It presents evaluation results demonstrating that the latency-sensitivity feature combined with pass-through mechanisms considerably reduces both median response time and jitter compared to the default configuration, achieving near-native performance.

- It discusses the side effects of using the latency-sensitivity feature and presents best practices.
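The two metrics named in the list above, median response time and jitter, can be computed from a set of latency samples with a few lines of Python. This is only an illustrative sketch: the function name `summarize_latency` is hypothetical, the sample values are made up for demonstration and are not measurements from this paper, and jitter is assumed here to mean the standard deviation of the samples, which may differ from the definition used in the evaluation.

```python
import statistics


def summarize_latency(samples_us):
    """Return (median, jitter) for latency samples given in microseconds.

    Assumption: jitter is taken to be the population standard deviation
    of the samples. Other definitions (for example, a high percentile
    minus the median) are equally plausible.
    """
    if len(samples_us) < 2:
        raise ValueError("need at least two samples")
    median = statistics.median(samples_us)
    jitter = statistics.pstdev(samples_us)
    return median, jitter


# Made-up sample data for illustration only (not results from the paper).
native_us = [10.0, 11.0, 10.0, 12.0, 11.0]
virtualized_us = [14.0, 30.0, 15.0, 22.0, 16.0]

native_median, native_jitter = summarize_latency(native_us)
virt_median, virt_jitter = summarize_latency(virtualized_us)

print(f"native:      median={native_median:.1f} us, jitter={native_jitter:.2f} us")
print(f"virtualized: median={virt_median:.1f} us, jitter={virt_jitter:.2f} us")
```

Reporting the median alongside a spread measure matters because a configuration can show a low typical response time while still suffering occasional long delays, and the spread is what exposes those delays.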